ChatGPT makes money by converting its ~700M monthly active free-tier users into $20/mo Plus, $200/mo Pro, and $25–50+/seat organizational subscribers, while simultaneously selling developers per-token API access and signing publishers into content-licensing deals. In 2026 total revenue runs ~$12B annualized; subscriptions lead, the API is second, enterprise is compounding fastest, and advertising — once off-limits — is now live and scaling because the unit economics of the free tier require a second revenue engine.

How Does ChatGPT Make Money? — 2026 Guide | Thrad

ChatGPT is the most valuable consumer product OpenAI ships, and its economics are finally public enough to examine end-to-end. Four revenue streams dominate in 2026 — consumer subscriptions, the API, enterprise licenses, and a fast-growing tier of advertising and partnership deals. This is the funnel-level narrative of how the business actually works and why the mix is shifting.



ChatGPT makes money through four main revenue streams in 2026: consumer subscriptions, usage-based API access, enterprise and team seat licenses, and a fast-growing layer of advertising, search, and licensing deals. Each stream serves a different audience and carries a different margin profile, and the balance between them is shifting quickly as free-tier usage outpaces paid conversion. This is the narrative version of the business — the funnel view of how money flows into OpenAI, why each stream exists, and what forces are reshaping the mix. If you want the line-by-line structural teardown, read the revenue-streams-breakdown piece; this one tells the story.

At Q1 2026, OpenAI's total revenue runs approximately $12 billion annualized, up from $4B at Q1 2024 — a tripling in two years. The rate of change, not just the level, is what tells you why ads and licensing have moved from "maybe someday" to "live and scaling."

What is ChatGPT's business model?

ChatGPT is a two-sided monetization engine with a third layer starting to form on top. On the consumer side, it charges end users directly — $20/mo for ChatGPT Plus, $200/mo for ChatGPT Pro, plus Team ($25–30/seat) and Enterprise ($50+/seat) tiers for organizations — for a polished product experience on top of the free tier. On the developer side, it charges businesses that build on top of the same models through the OpenAI API, priced per million tokens of input and output. On top of both, a newer third layer monetizes free-tier users through paid search placements, licensing deals with publishers, and partnership revenue that bundles distribution with compute credits.

The business model's defining characteristic is the free tier. Roughly 700 million monthly active users touch ChatGPT without paying, and that free usage is simultaneously OpenAI's greatest asset and its biggest cost center. The asset: unprecedented distribution, training-data signal, and brand gravity. The cost: billions of dollars per year in compute that subscription conversion alone can't cover. The entire revenue architecture is shaped by the need to monetize that free tier without destroying the product experience that makes it valuable.

What are ChatGPT's four revenue streams?

Consumer subscriptions (ChatGPT Plus and Pro)

This is the line most users see. $20/mo Plus unlocks GPT-4o and higher rate limits, image generation, file uploads, and custom GPTs; $200/mo Pro adds Pro-only models, deep reasoning modes (o1-pro, o3-deep), video features, Advanced Voice with longer session length, and Operator agent access. Plus is the workhorse — an estimated 15–17 million subscribers and ~$3.6–4.0B annualized. Pro is small in count (~250–300k subscribers) but contributes disproportionately per user at $2,400/year versus $240 for Plus, generating roughly $600–720M annualized.

Together, consumer subscriptions account for approximately $4.3B of the $12B total, or 36% of OpenAI's revenue. They are the most visible line and the first place most people think of when asked how ChatGPT makes money. They're also the line with the slowest growth — Plus is decelerating as the addressable market saturates, and only Pro is still compounding at rates that look like 2023-era AI growth.
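The subscription estimates above are simple products of subscriber count, list price, and twelve months. A minimal sketch of that arithmetic, using only the subscriber ranges and prices quoted in this section (the helper name `annualized_revenue` is illustrative):

```python
def annualized_revenue(subscribers: float, monthly_price: float) -> float:
    """Annualized revenue in dollars for a flat monthly subscription."""
    return subscribers * monthly_price * 12

# Subscriber ranges and list prices as quoted in the text above.
plus_low = annualized_revenue(15e6, 20)
plus_high = annualized_revenue(17e6, 20)
pro_low = annualized_revenue(250e3, 200)
pro_high = annualized_revenue(300e3, 200)

print(f"Plus: ${plus_low / 1e9:.2f}B to ${plus_high / 1e9:.2f}B")
print(f"Pro:  ${pro_low / 1e6:.0f}M to ${pro_high / 1e6:.0f}M")
```

Summing the two ranges gives roughly $4.2B to $4.8B, so the ~$4.3B combined figure sits toward the lower end of what the subscriber estimates imply.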

API access (pay-per-token)

Every time a developer calls GPT-4o, GPT-5, o1, o3-mini, or a specialized endpoint (embeddings, realtime audio, image generation, Whisper), OpenAI meters the tokens consumed and bills the account. Pricing varies by model class — GPT-4o sits at $2.50 input / $10 output per million tokens, GPT-5 sits above that, and embeddings and audio have their own schedules. Cached prompts get ~50% off; batch calls get deeper discounts.
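To make per-token billing concrete, here is a rough cost estimator built only from the GPT-4o prices and the ~50% cached-prompt discount quoted above. The function name, its parameters, and the sample workload are hypothetical; real invoices depend on the current price schedule and on how caching is actually applied.

```python
# Per-million-token prices for GPT-4o as quoted in the text above.
INPUT_PRICE_PER_M = 2.50
OUTPUT_PRICE_PER_M = 10.00
CACHED_INPUT_DISCOUNT = 0.50  # "~50% off" for cached prompts, per the text

def request_cost(input_tokens: int, output_tokens: int, cached_input_tokens: int = 0) -> float:
    """Dollar cost of one call; cached_input_tokens is the discounted share of the input."""
    if cached_input_tokens > input_tokens:
        raise ValueError("cached_input_tokens cannot exceed input_tokens")
    fresh_tokens = input_tokens - cached_input_tokens
    billable_input = fresh_tokens + cached_input_tokens * (1 - CACHED_INPUT_DISCOUNT)
    input_cost = billable_input * INPUT_PRICE_PER_M / 1e6
    output_cost = output_tokens * OUTPUT_PRICE_PER_M / 1e6
    return input_cost + output_cost

# Hypothetical workload: 2,000 input tokens (1,500 of them cached) and 500 output tokens.
print(f"${request_cost(2_000, 500, cached_input_tokens=1_500):.6f}")
```

Even in this small example the output tokens account for most of the bill, which is why output-heavy workloads feel price changes the most.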

At roughly $2.8–3.2B annualized, the API is the second-largest line and the one most exposed to the broader AI ecosystem. It powers coding assistants (Cursor, Windsurf, Cognition's Devin), customer-support platforms (Intercom Fin, Sierra, Decagon), content pipelines, voice-agent products, and thousands of smaller AI-native startups; Stripe's State of AI Monetization 2026 puts the population at more than 50,000 AI-native businesses running meaningful API traffic. Token volume is growing roughly 3× year-over-year while per-token prices have fallen ~45%, which produces net revenue growth of roughly 60–65% (3 × 0.55 ≈ 1.65): fast, but slower than the usage chart would suggest.
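The relationship between volume growth, price decline, and revenue growth can be sketched in a few lines. This assumes revenue is simply volume times a single blended price, which ignores shifts in model mix; the function name is illustrative.

```python
def net_revenue_growth(volume_multiplier: float, price_decline: float) -> float:
    """Revenue growth rate when volume scales by volume_multiplier
    and the blended unit price falls by price_decline (0.45 means 45%)."""
    return volume_multiplier * (1 - price_decline) - 1

growth = net_revenue_growth(volume_multiplier=3.0, price_decline=0.45)
print(f"Net revenue growth: {growth:.0%}")
```

With the rounded inputs from the text the naive product comes out at about 65%, in the same neighborhood as the ~60% estimate; the gap is within what rounded inputs would produce.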

Team and Enterprise seats

Team plans start around $25–30 per seat per month with shared workspaces, admin controls, and a contractual no-training-on-data guarantee. Enterprise plans are negotiated, typically $50+/seat with effective pricing after volume discounts closer to $38–45, and add SSO, SCIM, SOC 2 Type II audit coverage, data-retention controls, training opt-out, higher rate limits, longer context windows, and a named account team.

At an estimated 1.5M Team seats ($450–540M annualized) and 3.5–4M Enterprise seats ($1.8–2.0B annualized), the organizational tiers together produce ~$2.4B annualized, compounding at ~80% year-over-year. Enterprise is OpenAI's margin-heaviest line (customers pay for governance and predictability, not raw compute), and it's the line most analysts now expect to overtake consumer subscriptions by late 2027 on current trajectory.
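One way to sanity-check the seat estimates is to back out the monthly price per seat that each revenue figure implies and compare it with the quoted price bands. A small sketch using only the numbers in this section (the helper and variable names are illustrative):

```python
def implied_monthly_price_per_seat(annualized_revenue: float, seats: float) -> float:
    """Monthly price per seat implied by an annualized revenue figure."""
    return annualized_revenue / seats / 12

# Enterprise: 3.5M to 4M seats against $1.8B to $2.0B annualized.
enterprise_at_low_estimate = implied_monthly_price_per_seat(1.8e9, 3.5e6)
enterprise_at_high_estimate = implied_monthly_price_per_seat(2.0e9, 4.0e6)

# Team: 1.5M seats against $450M to $540M annualized.
team_at_low_estimate = implied_monthly_price_per_seat(450e6, 1.5e6)
team_at_high_estimate = implied_monthly_price_per_seat(540e6, 1.5e6)

print(f"Enterprise: ${enterprise_at_high_estimate:.2f} to ${enterprise_at_low_estimate:.2f} per seat")
print(f"Team:       ${team_at_low_estimate:.2f} to ${team_at_high_estimate:.2f} per seat")
```

Both Enterprise values land inside the $38–45 effective-price band, and the Team values reproduce the $25–30 list range, so the seat counts and revenue figures are at least mutually consistent.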

Advertising, search, and licensing

This is the newest stream and the one changing fastest. In 2026 OpenAI is running paid search placements inside ChatGPT's search surface, has signed content-licensing deals with major publishers (News Corp, Axel Springer, the Financial Times, Condé Nast, Dotdash Meredith, Associated Press, Reuters, Vox Media, and more than a dozen others) that combine upfront payments with revenue share, and has begun experimenting with sponsored product suggestions inside shopping-oriented prompts.

Combined, this layer produces an estimated $500–700M annualized at Q1 2026 — small against the $12B total but growing at a rate (tripling quarter-over-quarter in the ads subset) that makes it the fastest-moving part of the revenue picture. eMarketer projects generative-search ad spend at $8–12B industry-wide by 2028, with OpenAI capturing a disproportionate share given ChatGPT's ~50% share of assistant traffic.

| Stream | Est. 2026 annualized | Share of total | Growth trajectory |
|---|---|---|---|
| Consumer subs (Plus + Pro) | ~$4.3B | ~36% | Decelerating to ~20% YoY |
| OpenAI API | ~$3B | ~25% | ~60% YoY (volume 3×, price down 45%) |
| Team + Enterprise | ~$2.4B | ~20% | ~80% YoY compounding |
| Ads + licensing | ~$500–700M | ~5% | >150% YoY, fastest growing |
| Other/unattributed | ~$1.4B | ~14% | Partnership deals, compute reseller |

Figures are directional and synthesized from The Information, Bloomberg, OpenAI's own disclosures, and third-party estimates.
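The share column can be recomputed from the dollar estimates. The sketch below takes the midpoint of the ads and licensing range and, as an assumption of this example, treats "Other" as whatever remains of the $12B headline after the four named streams.

```python
TOTAL_BN = 12.0  # headline annualized revenue from the text, in $B

named_streams_bn = {
    "Consumer subs (Plus + Pro)": 4.3,
    "OpenAI API": 3.0,
    "Team + Enterprise": 2.4,
    "Ads + licensing": 0.6,  # midpoint of the $500M to $700M range
}

# Assumption of this sketch: "Other" is the residual against the headline total.
streams_bn = dict(named_streams_bn)
streams_bn["Other/unattributed"] = TOTAL_BN - sum(named_streams_bn.values())

shares = {name: value / TOTAL_BN for name, value in streams_bn.items()}
for name, share in shares.items():
    print(f"{name:<28}{share:6.1%}")
```

Computed this way the residual is closer to $1.7B than the ~$1.4B listed, which matches the ~14% share and is the kind of slack to expect from rounded, directional estimates.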

ChatGPT's free tier is the largest distribution footprint on the open web: roughly 700 million monthly active users, comparable to a medium-sized social network. The free tier can only exist long-term if something else pays for its compute bill. In 2026, that "something else" became advertising plus licensed content deals, not because OpenAI wanted to, but because the math required it.

Why is advertising no longer optional?

The structural issue: free-tier usage is enormous, conversion to paid is low single digits, and inference costs — while falling per token — are not falling fast enough to cover the unit economics of free users at scale. Working through the math: 700M monthly active users × an average of a few free queries per session × GPU cost per query × multiple sessions per month produces a compute bill that dwarfs any subscription line's contribution margin.
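The multiplication described above can be written as a small function. The source gives no figures for sessions, queries, or cost per query, so every input in the example call other than the 700M user count is a hypothetical placeholder chosen only to show the shape of the calculation.

```python
def monthly_free_tier_compute_cost(
    monthly_active_users: float,
    sessions_per_user_per_month: float,
    queries_per_session: float,
    cost_per_query_usd: float,
) -> float:
    """Monthly serving cost: users x sessions x queries per session x cost per query."""
    return (
        monthly_active_users
        * sessions_per_user_per_month
        * queries_per_session
        * cost_per_query_usd
    )

# HYPOTHETICAL inputs, not sourced estimates; only the 700M MAU comes from the text.
monthly_cost = monthly_free_tier_compute_cost(
    monthly_active_users=700e6,
    sessions_per_user_per_month=10,  # placeholder
    queries_per_session=4,           # placeholder
    cost_per_query_usd=0.01,         # placeholder
)
print(f"Monthly: ${monthly_cost / 1e6:,.0f}M, annualized: ${monthly_cost * 12 / 1e9:.2f}B")
```

Because the four factors multiply, halving any one of them halves the bill, which is why the conversion rate and the per-query cost are the two levers the argument focuses on.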

Even optimistic assumptions — a doubling of conversion rate to 5%, a halving of per-query compute cost — don't close the gap. Free-tier compute cost for a mature consumer assistant at this scale requires a second revenue engine, and advertising is the default answer because it monetizes the specific usage that subscriptions can't reach. Three practical consequences shipping through 2026 and 2027:

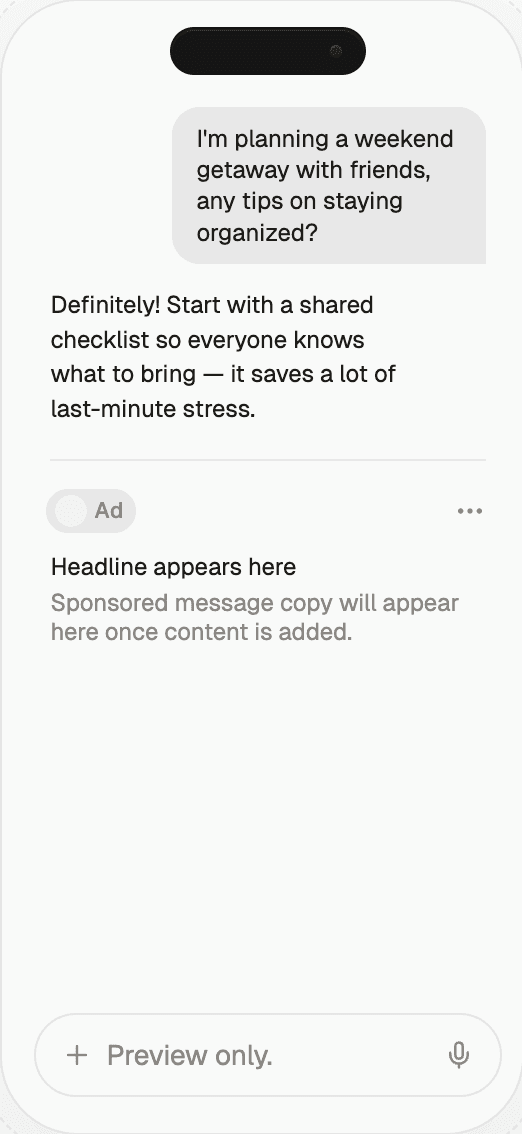

- Paid search in ChatGPT: clearly labeled sponsored results appear when a user prompt has commercial intent, in a format that looks more like Google Shopping ads than search ads and is inventory-capped at one to three positions per query to protect the answer experience. For brands, the direct way to participate is through a marketplace like Thrad that handles advertising on ChatGPT.
- Content-licensing deals: publishers get paid for their content being used in answers; in return OpenAI gets cleaner training data, retrieval-time access, and attribution rights. The upfront deal structure produces lumpy revenue but builds a durable citation surface.
- Shopping partnerships: early pilots with retailers and affiliate networks let ChatGPT surface product recommendations where the merchant pays per click or per conversion, effectively making ChatGPT a commerce rail alongside Amazon and Google Shopping. The underlying plumbing (how the placement decision gets made and who gets paid) is the same marketplace architecture used by Thrad's AI ad network.

None of this replaces the subscription business. All of it is additive — and the additive layer is where the next few billion of ARR is coming from.

How does the free-to-paid conversion funnel actually work?

ChatGPT's monetization funnel has three distinct conversion stages, each with its own mechanics. The first: free to Plus. Most free users hit rate limits on GPT-4o (number of messages per time window) or find a specific unlocked feature (image generation, custom GPTs, file uploads) compelling enough to justify $20/month. Conversion runs roughly 2–3% of monthly active free users into paying Plus subscribers, which on 700M MAU is 14–21M paying — consistent with the estimated Plus subscriber count.
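The free-to-Plus stage can be checked in both directions: from the conversion rate to a subscriber count, and from the subscriber estimate back to an implied rate. A short sketch using the 700M MAU, 2–3% conversion, and 15–17M subscriber figures from this article (function names are illustrative):

```python
FREE_MAU = 700e6  # monthly active users, from the text

def paying_users(mau: float, conversion_rate: float) -> float:
    """Subscribers produced by a given free-to-paid conversion rate."""
    return mau * conversion_rate

def implied_conversion(subscribers: float, mau: float) -> float:
    """Conversion rate implied by a subscriber count."""
    return subscribers / mau

for rate in (0.02, 0.03):
    print(f"{rate:.0%} conversion -> {paying_users(FREE_MAU, rate) / 1e6:.0f}M Plus subscribers")

for subs in (15e6, 17e6):
    print(f"{subs / 1e6:.0f}M subscribers -> {implied_conversion(subs, FREE_MAU):.2%} implied conversion")
```

The 15–17M subscriber estimate implies a conversion rate a little above 2%, near the lower end of the quoted range.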

The second stage: Plus to higher tiers. A minority of Plus users upgrade to Pro ($200/mo) for deeper reasoning and Operator access. Another meaningful share consolidates into Team workspaces as their work teams adopt ChatGPT collectively. The specific trigger for Team consolidation is usually a shared project or a legal/compliance requirement that Plus's consumer-grade terms can't satisfy.

The third stage: Team to Enterprise. At around 50–100 seats in a single organization, IT/security typically requires SSO, SCIM, and data residency controls that Team doesn't offer, and the account moves to Enterprise at renewal. This stage is the highest-value conversion (a Team-to-Enterprise migration can 10× the account revenue once the higher per-seat price is combined with added seat volume) and the one OpenAI's finance team almost certainly optimizes for.

| Funnel stage | Typical trigger | Revenue multiplier | Conversion rate |
|---|---|---|---|
| Free → Plus | Rate limits / unlocked features | ~∞ (from $0) | ~2–3% of MAU |
| Plus → Pro | Deep-reasoning / Operator need | 10× ($20 → $200) | <5% of Plus |
| Plus → Team | Work-team consolidation | 1.25–1.5× per seat | ~0.5–1% of Plus users annually |
| Team → Enterprise | IT security requirements | 1.7–2× per seat + volume | ~20–30% of Team orgs 50+ seats |

The funnel mechanics explain why OpenAI invests so heavily in workspace features, admin tooling, and compliance certifications — these are the rails that move high-revenue conversions through the later stages of the funnel.
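The per-seat multipliers in the funnel follow directly from the tier prices quoted earlier. In this sketch Enterprise is pinned at its $50 list floor, which is an assumption, since negotiated pricing varies; the dictionary and function names are illustrative.

```python
# (low, high) monthly price per seat for each tier, from the article's figures.
# Assumption: Enterprise is taken at its $50 list floor.
TIER_PRICES = {
    "Plus": (20, 20),
    "Pro": (200, 200),
    "Team": (25, 30),
    "Enterprise": (50, 50),
}

def multiplier_range(source: str, target: str) -> tuple[float, float]:
    """Smallest and largest per-seat revenue multiplier when moving between tiers."""
    source_low, source_high = TIER_PRICES[source]
    target_low, target_high = TIER_PRICES[target]
    return target_low / source_high, target_high / source_low

for source, target in [("Plus", "Pro"), ("Plus", "Team"), ("Team", "Enterprise")]:
    low, high = multiplier_range(source, target)
    print(f"{source} -> {target}: {low:.2f}x to {high:.2f}x per seat")
```

Per-seat pricing alone tops out around 2× for the Team-to-Enterprise step, so any larger jump in account revenue has to come from added seats rather than price.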

How does ChatGPT compare to Google's revenue model?

Google Search is almost entirely ad-funded and generates roughly $250B+ annually at high operating margins — a 20-year-mature business. ChatGPT is primarily subscription-funded at ~$12B annualized with ads as a growing supplement, and is pre-profitability at the company level. The two models are converging from opposite directions: Google is adding Gemini-based generative answers (which compress ad inventory per query and force new ad formats inside the answer); OpenAI is adding ad placements to a historically ad-free surface.

Neither model has stabilized. The 2026 mix is a snapshot of a moving target, and the most likely 2028 picture is both products looking more similar than they do today — generative answers with inline ads, sponsored citations, and commerce rails. Revenue scale will remain an order of magnitude apart through 2027, but the shape of the revenue will converge.

Google Search at ~$250B annually versus ChatGPT at ~$12B: the ratio is ~20×. In 2022 the ratio was undefined because ChatGPT had no revenue. The compression has happened fast enough that Wall Street now treats ChatGPT as a structural threat to Google search monetization even though the absolute dollar gap is still wide. What Wall Street is pricing is the rate of change, not the level.

What are the common misconceptions about ChatGPT's monetization?

- "ChatGPT is profitable because it charges $20/mo." No. Gross margin per Plus subscriber is positive at the unit level, but company-wide ChatGPT is not profitable once training compute (a GPT-5 training run reportedly cost $1B+), free-tier serving, and R&D are loaded in. Profitability is a 2027–2028 story on current trajectory.
- "Ads will ruin ChatGPT's UX." The current design treats ads as a search-intent-only placement with strong labeling, inventory caps of 1–3 positions per query, and no ads on non-commercial prompts. It's more like Google's shopping ads than YouTube's mid-roll, designed to minimize disruption to information queries. Subscriber retention in the ads-live cohort has not measurably changed.
- "OpenAI doesn't need advertising because Microsoft invests in them." Microsoft's investments fund compute capacity (via Azure credits) and are largely one-way in accounting terms. Operating expenses at the ChatGPT product level (support, product engineering, free-tier compute at scale) still need to be covered by revenue. Microsoft's investment does not pay OpenAI's recurring cloud bill.
- "The API will kill subscriptions." The data doesn't support it. A minority of Plus users ever touch the API, and developers build on the API precisely because the consumer product validates that OpenAI is the default. The two lines reinforce each other more than they cannibalize.
- "ChatGPT's revenue is mostly recurring." Not cleanly. The mix spans subscriptions (recurring), API (usage-based, not strictly recurring), Enterprise (contractual, multi-year, effectively recurring), licensing (lumpy, upfront-heavy), and ads (auction-driven, not recurring at all). The "ARR" framing that works for SaaS partially breaks down at OpenAI's mix.
- "Free users are worthless." They are the training-data pipeline, the brand-awareness engine, and, increasingly, the advertising audience. Free users cost money in compute, but the strategic value they create is the single biggest asset OpenAI has.

What comes next for ChatGPT's revenue mix?

Expect the advertising line to grow fastest through 2026 and into 2027, enterprise to compound steadily at ~80% YoY, API to grow with the AI-native app ecosystem but with margin compression continuing, and consumer subscriptions to plateau as the addressable consumer market saturates. The 2027 revenue mix will likely show subscriptions below 30% of total for the first time since 2023, with enterprise approaching 30%, ads and licensing together above 10%, and API holding steady around 25%.
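A crude way to test the direction of this forecast is to compound each 2026 stream by its stated growth rate for one year and recompute the mix. The sketch below uses the table's figures, takes the midpoint for ads and licensing, treats ">150%" as exactly 150%, and holds "Other" flat; those three choices are assumptions of this example, not figures from the source.

```python
# (2026 annualized revenue in $B, assumed one-year growth rate) per stream.
streams = {
    "Consumer subs": (4.3, 0.20),
    "API": (3.0, 0.60),
    "Team + Enterprise": (2.4, 0.80),
    "Ads + licensing": (0.6, 1.50),  # midpoint of range; ">150%" taken as 150%
    "Other": (1.4, 0.00),            # assumption: held flat, no growth rate given
}

projected = {name: base * (1 + growth) for name, (base, growth) in streams.items()}
total = sum(projected.values())

for name, value in projected.items():
    print(f"{name:<18} ${value:5.2f}B {value / total:6.1%}")
print(f"{'Total':<18} ${total:5.2f}B")
```

Under these assumptions consumer subscriptions fall to roughly 30% of the total, the API sits in the high 20s, organizational seats reach about a quarter, and ads plus licensing land a little under 10%. That is directionally in line with the forecast above, though it is a naive compounding exercise rather than a reproduction of it.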

The most important question for brands in 2026 isn't whether ChatGPT will have ads — that's settled — but what form those ads take, which queries they appear on, and how to show up in them with integrity. Three specific 2026 signals worth tracking:

- Whether advertising crosses 5% of total revenue, which would make it a board-level strategic priority rather than a product experiment.
- Whether Enterprise ARR exceeds consumer subscription ARR, which would flip the analyst narrative from "consumer AI" to "enterprise AI."
- Whether a single licensing deal exceeds $1B in committed value, which would signal publishers see OpenAI as a durable distribution channel rather than a training-data licensee.

Any one of these would change the story. All three together would fundamentally reshape how the market values OpenAI.

How to act on this as a brand

If your team is thinking about showing up inside generative surfaces (ChatGPT, Perplexity, Copilot, and the rest), the first step is an audit: understand which commercial queries in your category already pull generative answers, how brands currently appear in those answers, and what a paid or licensed placement would look like at today's inventory structure.

Two workstreams usually follow. First, organic citation presence: content that's structured for retrieval-augmented generation, with clear attribution markers, stable URLs, and authoritative third-party citations that AI apps will surface as sources. Second, paid placement readiness: measurement infrastructure for generative-surface visibility, an understanding of which commercial-intent prompts in your category trigger sponsored placements, and creative/copy assets designed for the format constraints of inline answer-adjacent ads.

That's the gap Thrad helps close for brands navigating the new generative-advertising stack — measurement and placement tooling calibrated for ChatGPT and the broader assistant surface, with the same rigor buyers already apply to search and social today.


Citations:

OpenAI, "ChatGPT Plan Comparison — Free, Plus, Pro, Team, Enterprise," 2026. https://openai.com/chatgpt

The Information, "OpenAI revenue hits $12B annualized in Q1 2026," 2026. https://theinformation.com

IAB Tech Lab, "State of Data 2026: Post-Cookie Targeting Landscape," 2026. https://iabtechlab.com

Reuters, "OpenAI-News Corp licensing deal details," 2025. https://reuters.com

The Verge, "OpenAI begins testing paid search placements in ChatGPT," 2026. https://theverge.com

eMarketer, "Generative Search Ad Spending Forecast 2026–2028," 2026. https://emarketer.com
