OpenAI's ChatGPT ad policy currently disallows ads for regulated medical products, services, or claims, including prescription drugs, clinical care providers, hospitals, and OTC medications tied to healthcare providers or insurance. HIPAA, FDA fair-balance rules (updated in the 2023 Final Rule, 88 FR 80958), and new state AI disclosure laws (CA SB 942 and TX TRAIGA, both effective January 1, 2026) layer on top. Independent AI-assistant ad networks that enforce brand safety at the content and query-intent layer, rather than through a platform-wide category ban, are the realistic path for healthcare-adjacent advertisers in 2026.

ChatGPT Ads for Healthcare — 2026 HIPAA & FDA Guide | Thrad

Healthcare and pharma are mostly locked out of ChatGPT's direct ad program by blanket category exclusion. The policy answer is coarse — "no regulated medical products, services, or claims" — and it sweeps in providers, payers, pharma, and much of digital health in one stroke. Independent AI-assistant ad networks with content-level brand-safety controls, not platform-level category bans, are how healthcare marketers reach AI-assistant inventory today.

Category: Advertising AI

Keyword: chatgpt ads for healthcare

Healthcare is the vertical where ChatGPT's direct-ad approach is at its coarsest, and it's also the vertical where the cost of getting compliance wrong is the highest. The current OpenAI policy disallows regulated medical products, services, and claims as a category — a blanket exclusion that sweeps in hospitals, clinical care, prescription drugs, insurance-linked OTC, and most of digital health in one line. Layer HIPAA, the FDA's sharpened DTC enforcement, and two new state AI laws on top, and the honest answer for a healthcare marketer asking "can I run ChatGPT ads in 2026" is: mostly no on the first-party direct product, yes on independent AI-assistant networks that enforce brand-safety at the content level instead of through a category ban. This is a playbook for navigating that distinction.

What are ChatGPT ads for healthcare in 2026?

ChatGPT ads for healthcare in 2026 are paid placements inside ChatGPT's search and conversational surfaces promoting a health, wellness, or healthcare-adjacent product — constrained by OpenAI's category exclusions and the broader regulatory stack that governs healthcare advertising in any channel. OpenAI's published ad policy is explicit: ads for regulated medical products, services, or claims involving the prevention, diagnosis, or treatment of physical or mental health conditions are disallowed, and this includes prescription drugs, clinical care providers, hospitals, prescription services, OTC medications, and other medical products or services offered through healthcare providers or insurance networks.

General wellness — fitness equipment, wearable activity devices, menstrual products, incidental self-care — is carved out as permitted. The line between "general wellness" and "regulated medical" is where most of the 2026 policy ambiguity lives.

Why is healthcare blanket-excluded from ChatGPT direct ads?

Healthcare is blanket-excluded because the failure modes — a sponsored placement that reads like medical advice, or that omits FDA fair-balance risk information, or that lands against a sensitive medical conversation — threaten the answer-trust posture OpenAI has committed to maintaining. A narrow-gate admit-review process works for a few dozen large advertisers at a time, which is how OpenAI's current fintech approval path works. It does not scale to the thousands of healthcare brands, hospitals, and pharma marketers who would plausibly want access, and OpenAI has signalled it prefers a blanket exclusion with later expansion over a case-by-case admittance that invites enforcement risk.

Three forces sharpen the exclusion:

- FDA's 2026 DTC crackdown. The agency has been vocal through 2025 and 2026 about closing the "adequate provision" loophole, enforcing fair balance more strictly in broadcast and digital, and targeting presentations where benefit claims structurally dominate risk disclosure. It is hard for a 50-word sponsored card on ChatGPT to pass that test at scale.
- HIPAA-adjacent risk at the intermediary. Even if ChatGPT is not a covered entity or business associate, running ads on behalf of covered entities pulls the ad network into a supporting role where HIPAA marketing-rule expectations apply, particularly on targeting inputs.
- Conversational context risk. The highest-value medical queries (symptom checks, treatment comparisons, insurance questions) are exactly where a sponsored placement is most dangerous to user trust. Excluding the category is cheaper than building the classification layer.

The exclusion is unlikely to fully lift in 2026. Incremental expansion to permitted subcategories is the realistic path.

What categories are permitted vs. disallowed?

This is where precision pays. Healthcare is a wide category and the boundary between "permitted wellness" and "disallowed medical" is not obvious at first read. Here's the working map as of Q2 2026, drawn from OpenAI's policy language and agency guidance:

| Subcategory | OpenAI direct (2026) | Notes |
|---|---|---|
| Hospitals and health systems | Disallowed | Clinical care provider category |
| Clinical care providers (incl. telehealth with Rx) | Disallowed | Provider services category |
| Prescription drugs (DTC) | Disallowed | FDA-regulated medical claims |
| OTC medications (pharmacy-distributed) | Disallowed | Medical product category |
| Health insurance | Disallowed | Insurance-linked medical services |
| Mental health clinical services | Disallowed | Treatment claims |
| Pharmacy and Rx delivery | Disallowed | Medical services category |
| Fitness equipment | Permitted | No medical claims in creative |
| Activity wearables (no dx features) | Permitted | Line moves once diagnosis enters |
| Menstrual products | Permitted | Explicitly carved out |
| Nutrition and supplements | Permitted with caution | No disease-state claims |
| General personal care / hygiene | Permitted | Non-medical framing required |
| Health/wellness education (no product) | Permitted | Informational content, not a Rx promotion |
| Digital health SaaS (enterprise B2B) | Case by case | Often permitted if marketed as software, not care |

For anything in the disallowed column, the direct-ad path is not open in 2026. Independent AI-assistant networks with content-level controls are where those advertisers activate.
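As a rough illustration, the working map above can be encoded as a lookup that a media-planning script checks before routing a campaign. This is a minimal sketch, not OpenAI's implementation: the dictionary keys, the `Status` enum, and the choice to treat unknown subcategories as disallowed are all assumptions made for the example; only the statuses come from the table.

```python
from enum import Enum


class Status(Enum):
    DISALLOWED = "disallowed"
    PERMITTED = "permitted"
    CAUTION = "permitted with caution"
    CASE_BY_CASE = "case by case"


# Hypothetical keys; statuses copied from the table above (Q2 2026 reading).
OPENAI_DIRECT_POLICY = {
    "hospitals_health_systems": Status.DISALLOWED,
    "clinical_care_providers": Status.DISALLOWED,
    "prescription_drugs_dtc": Status.DISALLOWED,
    "otc_medications": Status.DISALLOWED,
    "health_insurance": Status.DISALLOWED,
    "mental_health_clinical": Status.DISALLOWED,
    "pharmacy_rx_delivery": Status.DISALLOWED,
    "fitness_equipment": Status.PERMITTED,
    "activity_wearables": Status.PERMITTED,
    "menstrual_products": Status.PERMITTED,
    "nutrition_supplements": Status.CAUTION,
    "personal_care_hygiene": Status.PERMITTED,
    "wellness_education": Status.PERMITTED,
    "digital_health_saas_b2b": Status.CASE_BY_CASE,
}


def direct_status(subcategory: str) -> Status:
    """Look up a subcategory; unknown ones default to DISALLOWED (an assumption)."""
    return OPENAI_DIRECT_POLICY.get(subcategory, Status.DISALLOWED)


def needs_alternative_channel(subcategory: str) -> bool:
    """True when the direct-ad path is closed for this subcategory."""
    return direct_status(subcategory) is Status.DISALLOWED
```

Defaulting unknown subcategories to disallowed keeps the sketch conservative: a new product line has to be mapped explicitly before anyone assumes the direct path is open.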

What regulations govern healthcare AI-assistant ads?

Four regulatory regimes layer on top of OpenAI's platform policy, and every one of them is active in 2026 enforcement.

HIPAA Privacy Rule marketing provisions

HIPAA restricts covered entities and their business associates from using PHI for marketing without authorization. In a digital-ad context that translates into hard rules on targeting inputs: no patient-list custom audiences, no EHR-derived retargeting, no condition-page remarketing pixels on covered-entity properties. These rules apply in every channel, including AI-assistant surfaces. They are the single most important compliance principle for healthcare digital advertising.
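One way to make the targeting-input rule operational is a pre-launch check that rejects any input whose provenance is PHI-derived. The sketch below assumes each targeting input carries a provenance label assigned during campaign setup; the label names and the `TargetingInput` type are hypothetical, and a real review would involve counsel rather than a string match.

```python
from dataclasses import dataclass

# Hypothetical provenance labels for the PHI-derived inputs named above.
PHI_DERIVED_SOURCES = frozenset({
    "patient_list",          # patient-list custom audiences
    "ehr_export",            # EHR-derived retargeting
    "condition_page_pixel",  # condition-page remarketing pixels
})


@dataclass(frozen=True)
class TargetingInput:
    name: str
    source: str  # provenance label assigned during campaign setup


def phi_violations(inputs: list[TargetingInput]) -> list[str]:
    """Return the names of inputs whose provenance is PHI-derived."""
    return [item.name for item in inputs if item.source in PHI_DERIVED_SOURCES]
```

A campaign would launch only when `phi_violations(...)` returns an empty list; anything else goes back for review.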

FDA DTC advertising regulations

21 CFR Part 202 governs prescription-drug advertising, and the 2023 Final Rule on risk-information presentation — enforced aggressively through 2026 — requires risk information to be clear, conspicuous, and neutral in prominence relative to benefit claims. The FDA is actively closing the "adequate provision" loophole that allowed broadcast ads to defer risk information to a separate channel. On a generative AI surface, where creative is short and attention is high, the neutral-prominence standard is structurally hard to satisfy in a sponsored card. This is the core reason DTC pharma is categorically excluded from the direct ChatGPT ad product today.

FTC deceptive-advertising rules

The FTC's truth-in-advertising framework applies on top of FDA for OTC and wellness. Disease-state claims, efficacy claims, and comparison claims all need substantiation. The FTC has been coordinating more closely with FDA through 2025 on health-wellness gray-zone claims, which means brands straddling the wellness-medical line face cross-agency exposure.

State AI laws

Two that matter most in 2026 healthcare advertising:

- California SB 942 (AI Transparency Act, effective Jan 1, 2026): requires covered generative-AI providers with 1M+ monthly users to offer tools that let users determine whether content was AI-generated. The operative impact on ad creative is coming through secondary rulemaking; the signal is that AI-origin disclosure is becoming a consumer right.
- Texas TRAIGA (effective Jan 1, 2026): practitioners must provide patients with conspicuous written disclosure of AI use in diagnosis or treatment. Does not directly govern ad copy, but creates an adjacent compliance expectation that affects product positioning.

HIPAA is the hardest no in digital healthcare advertising: targeting inputs, retargeting lists, and conversion data cannot be derived from PHI. That rule predates AI assistants by decades and survives every new ad surface.

How does content-level brand safety beat category bans?

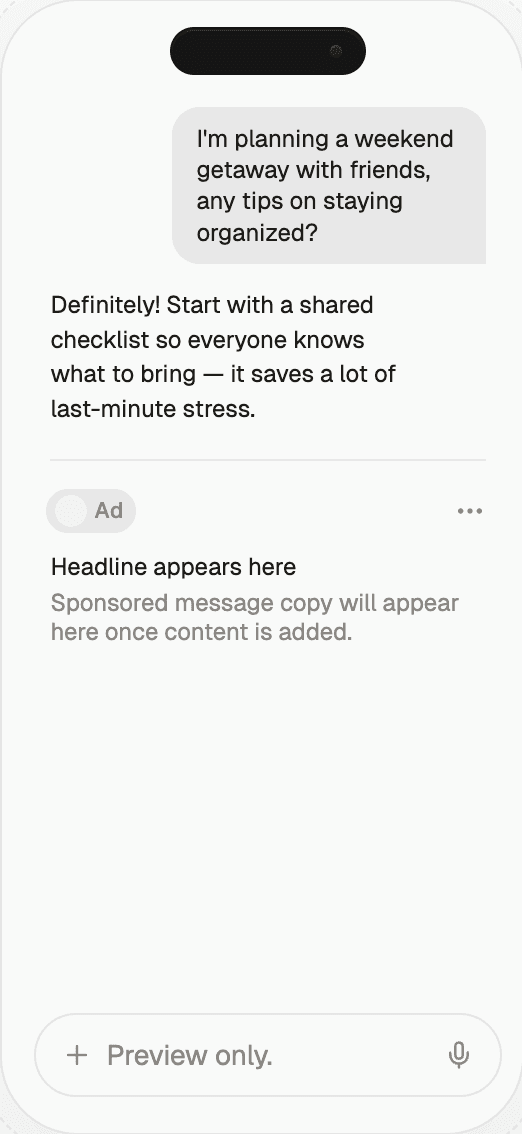

Content-level brand safety is an approach that filters individual ad placements based on the query intent and the answer context, rather than excluding an entire advertiser category platform-wide. The distinction is the difference between admitting a legitimate digital-health SaaS advertiser into conversations about productivity or team collaboration while excluding them from any conversation about diagnosis or treatment, versus banning them outright from every conversation on the platform.

The four content-level controls that matter for healthcare:

- Query-intent classification. Every incoming prompt is classified for intent and sensitivity. Medical, diagnostic, mental-health, and crisis-related prompts are flagged and placement-excluded regardless of advertiser approval status.
- Answer-context scoping. The generative answer is checked before the ad is rendered. If the answer discusses a medical condition, treatment, or sensitive health topic, no healthcare-adjacent ad is permitted to render next to it, even if the advertiser is pre-approved for the broader query.
- Claim-library enforcement. The advertiser's approved claim library is applied to every creative variant. Disease-state language, comparison claims, and outcome claims are pre-reviewed and limited to advertiser-specified approved phrasing.
- Disclaimer rendering at the placement layer. Fair-balance risk language, FDA-mandated disclosures, and required caveats are injected into the visible creative, at the point of the claim, rather than linked out.

A walled platform that blanket-excludes healthcare cannot distinguish a productivity-software ad from a pharma DTC ad when both are submitted under "healthcare." A content-level network can.
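To make the contrast concrete, the first two controls (query-intent classification and answer-context scoping) can be sketched as a per-placement gate. This is an illustrative sketch only: the keyword lists stand in for a real classifier, and the `Advertiser` type and function names are hypothetical rather than any network's actual API.

```python
from dataclasses import dataclass

# Hypothetical keyword stems standing in for a trained intent classifier.
SENSITIVE_QUERY_TERMS = ("symptom", "diagnos", "treatment", "medication", "suicid", "overdose")
MEDICAL_ANSWER_TERMS = ("diagnos", "treatment", "dosage", "prescri", "side effect")


@dataclass(frozen=True)
class Advertiser:
    name: str
    healthcare_adjacent: bool
    approved: bool


def is_sensitive_query(prompt: str) -> bool:
    """Flag medical, diagnostic, mental-health, or crisis-related prompts."""
    text = prompt.lower()
    return any(term in text for term in SENSITIVE_QUERY_TERMS)


def answer_is_medical(answer: str) -> bool:
    """Flag answers that discuss a condition, treatment, or sensitive health topic."""
    text = answer.lower()
    return any(term in text for term in MEDICAL_ANSWER_TERMS)


def may_render(ad: Advertiser, prompt: str, answer: str) -> bool:
    """Decide per placement, not per advertiser category."""
    if not ad.approved:
        return False
    # Control 1: sensitive prompts are excluded regardless of approval status.
    if is_sensitive_query(prompt):
        return False
    # Control 2: no healthcare-adjacent ad next to a medical answer.
    if ad.healthcare_adjacent and answer_is_medical(answer):
        return False
    return True
```

Under these assumptions, an approved digital-health SaaS advertiser renders against a team-collaboration query and is suppressed as soon as either the prompt or the answer turns medical, which is the behaviour a category ban cannot express.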

What does the buyer path look like in practice?

The realistic path for a healthcare-adjacent advertiser in 2026 runs through one of four channels, each with a different access and risk profile:

| Channel | Access | Content-level controls | HIPAA posture | Typical fit |
|---|---|---|---|---|
| OpenAI direct | Closed to most healthcare | Platform-wide category ban | Ad network is not BA; advertiser carries risk | Wellness only |
| Independent AI-assistant network (e.g. Thrad) | Open with compliance onboarding | Query-intent + answer-context + claim-library enforcement | Network enforces HIPAA-compatible targeting | Digital health, wellness, provider-side B2B |
| Health-media network / publisher | Open to qualified brands | Context control via publisher | Varies; some are BAs | Pharma DTC, payer, provider |
| Affiliate network / performance | Low barrier | Minimal | Advertiser carries all risk | Supplements, OTC-adjacent |

Two of the four are live for most healthcare brands today. Independent AI-assistant networks are the path where the content-level controls described above exist as product. Health-media networks are the path where a publisher's audience relationship and context carry the brand safety load.

Why does this matter for healthcare marketers in 2026?

Three structural shifts are converging. First, health-research queries are migrating to AI assistants through late 2025 and 2026: users are asking ChatGPT, Perplexity, and Gemini for condition research, treatment comparisons, and provider discovery where they previously used Google. Second, OpenAI launched ChatGPT for Healthcare as an enterprise product in January 2026, signalling the company's willingness to engage with regulated healthcare on the enterprise side. Third, the regulatory stack has hardened: FDA enforcement is up, HIPAA expectations for AI business associates have been clarified, and state AI laws are taking effect.

The combination produces a narrow window where healthcare marketers that build the compliance scaffolding early — approved claim libraries, HIPAA-compatible targeting architectures, FDA-fair-balance creative templates — will be able to activate on AI-assistant channels as the restrictions loosen. Large pharma, payers, and health systems evaluating a bespoke rollout typically engage through Thrad's design partner tier, which is built for the strategic-partnership shape that regulated enterprises need. Teams that wait until OpenAI direct opens the category will be behind.

Common misconceptions

"If I'm not a covered entity, HIPAA doesn't apply to my healthcare ads." HIPAA applies to covered entities and their business associates, but the moment you run ads on behalf of a covered entity — or use targeting inputs derived from one — you're in the HIPAA orbit.

"Our pharmaceutical DTC will get a special review path on ChatGPT direct." No such path has been announced in 2026. The blanket exclusion is the current posture.

"General wellness is safe — we can say our device improves cardiovascular health." That crosses into a medical claim. Wellness permission evaporates the moment disease-state, diagnosis, or treatment language enters the creative.

"An AI-assistant ad network can't meet HIPAA." The network itself typically isn't a business associate; the question is whether its targeting architecture lets the advertiser stay HIPAA-compliant. Networks that don't rely on PHI-derived audience lists are compatible.

"California SB 942 regulates my ad copy directly." Not today. It regulates AI-content detection tools from covered providers. The indirect effect on ads is a trajectory signal, not an active rule.

"Blanket category bans and content-level filtering are the same thing." They are structurally different. A platform-wide ban cannot admit a legitimate healthcare-adjacent advertiser for a safe query. A content-level filter can.

What comes next

Expect three changes through the rest of 2026 and into 2027. First, OpenAI's healthcare ad exclusions will likely loosen at the edges — probably first for general-health products without medical claims, then for provider-side B2B (digital health SaaS, enterprise clinical tools), and last for anything regulated by FDA DTC rules. Second, HIPAA business-associate practices for AI vendors will mature. OpenAI has signalled willingness to sign BAAs for enterprise customers; ad networks serving healthcare will need their own HIPAA-aligned posture on targeting inputs and audit trails. Third, state AI laws will proliferate. Colorado, New York, and Illinois have active bills that mirror California and Texas; healthcare marketers should assume a state-by-state disclosure patchwork by 2027.

The measurement surface will tighten too. Expect MRC and IAB Tech Lab to issue healthcare-specific generative-surface guidance in the second half of 2026, likely including dual-modality analogues for text surfaces and stricter context-adjacency rules.

How to get started with healthcare AI-assistant advertising

Three practical steps for a healthcare marketer in 2026:

First, run the regulatory-stack audit. Map your product to HIPAA (covered entity, business associate, or non-covered), FDA DTC (prescription, OTC, device, none), FTC truth-in-advertising, and state AI disclosure laws. Every channel decision flows from that audit.
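The audit can be captured as a small record per product so that channel decisions reference one source of truth. The sketch below is an assumption-laden illustration: the field names, the two boolean flags, and the wording of the open items are invented for the example and do not replace legal review.

```python
from dataclasses import dataclass
from enum import Enum


class HipaaRole(Enum):
    COVERED_ENTITY = "covered entity"
    BUSINESS_ASSOCIATE = "business associate"
    NON_COVERED = "non-covered"


class FdaClass(Enum):
    PRESCRIPTION = "prescription"
    OTC = "OTC"
    DEVICE = "device"
    NONE = "none"


@dataclass(frozen=True)
class RegulatoryAudit:
    product: str
    hipaa_role: HipaaRole
    fda_class: FdaClass
    makes_health_claims: bool  # hypothetical flag: triggers FTC substantiation review
    uses_ai_in_care: bool      # hypothetical flag: triggers state AI disclosure review

    def open_items(self) -> list[str]:
        """List the regimes that need follow-up before choosing a channel."""
        items = []
        if self.hipaa_role is not HipaaRole.NON_COVERED:
            items.append("HIPAA: confirm no PHI-derived targeting inputs")
        if self.fda_class is not FdaClass.NONE:
            items.append(f"FDA: review advertising rules for {self.fda_class.value} products")
        if self.makes_health_claims:
            items.append("FTC: substantiate health and efficacy claims")
        if self.uses_ai_in_care:
            items.append("State AI law: review disclosure obligations")
        return items
```

For example, a non-covered sleep tracker that makes health claims but has no FDA classification would surface only the FTC item.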

Second, build the compliance scaffolding regardless of channel choice: the approved claim library (with clear wellness/medical boundaries), the HIPAA-compatible targeting architecture (no PHI-derived inputs), the fair-balance creative templates (with risk language neutral in prominence), and the AI-use disclosure pattern (aligned with CA SB 942 and TX TRAIGA trajectory).
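The claim library and creative templates from this step could look roughly like the following. It is a minimal sketch under stated assumptions: the approved phrases, the disease-state patterns, and the sample disclosure text are hypothetical placeholders, and pattern matching only pre-screens copy for human review.

```python
import re

# Hypothetical approved phrasing for a wellness brand.
APPROVED_CLAIMS = {
    "tracks daily activity",
    "supports a regular workout routine",
}

# Hypothetical patterns for disease-state, diagnosis, or treatment language.
DISEASE_STATE_PATTERNS = [
    re.compile(r"\b(treats?|cures?|prevents?|diagnos\w*)\b", re.IGNORECASE),
    re.compile(r"\b(disease|hypertension|diabetes|depression)\b", re.IGNORECASE),
]


def review_claim(claim: str) -> str:
    """Return 'approved', 'rejected', or 'needs_review' for one claim."""
    if any(pattern.search(claim) for pattern in DISEASE_STATE_PATTERNS):
        return "rejected"
    if claim.strip().lower() in APPROVED_CLAIMS:
        return "approved"
    return "needs_review"


def render_creative(claim: str, disclosure: str) -> str:
    """Place the required disclosure directly after the claim, not behind a link."""
    if review_claim(claim) != "approved":
        raise ValueError(f"claim not in approved library: {claim!r}")
    return f"{claim.strip().rstrip('.')}. {disclosure.strip()}"
```

Anything outside the approved set is routed to review rather than auto-approved, so a phrase such as "Improves cardiovascular health" never reaches a placement without a human decision.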

Third, pilot on a channel with content-level controls. Thrad's independent AI-assistant network enforces query-intent classification and answer-context scoping at the placement layer — which is what lets a legitimate health-wellness or digital-health advertiser activate on AI-assistant inventory today without waiting for OpenAI's direct product to open the category. Pilot spend can be small, the audit trail is durable, and the compliance posture is consistent with HIPAA-compatible targeting.

For pharma DTC, hospital systems, payers, and clinical-care providers, ChatGPT direct is not the path in 2026. For health-adjacent advertisers (wellness, fitness, nutrition without medical claims, digital health SaaS), independent AI-assistant networks with content-level brand-safety controls are the honest answer for the next 12-18 months.

Citations:

OpenAI, "Ads in ChatGPT — Help Center," 2026. https://help.openai.com/en/articles/20001047-ads-in-chatgpt

OpenAI, "Ad policies," 2026. https://openai.com/policies/ad-policies/

Foley & Lardner, "HIPAA Compliance for AI in Digital Health," May 2025. https://www.foley.com/insights/publications/2025/05/hipaa-compliance-ai-digital-health-privacy-officers-need-know/

FDA, "FDA Launches Crackdown on Deceptive Drug Advertising," 2025. https://www.fda.gov/news-events/press-announcements/fda-launches-crackdown-deceptive-drug-advertising

Foley & Lardner, "FDA's Final Rule on DTC Advertising — Presentation of Risk Information," December 2023 (governing in 2026). https://www.foley.com/insights/publications/2023/12/fdas-final-rule-on-direct-to-consumer-advertising-presentation-of-risk-information/

Adventure PPC, "ChatGPT Ads for Healthcare: HIPAA-Compliant Advertising Strategies in 2026," 2026. https://www.adventureppc.com/blog/chatgpt-ads-for-healthcare-hipaa-compliant-advertising-strategies-in-2026

Health Law & Policy Brief, "OpenAI to Launch ChatGPT Health," February 2026. https://www.healthlawpolicy.org/2026/02/08/openai-to-launch-chatgpt-health-amidst-shifting-ai-regulatory-schemes-surrounding-privacy/

Fierce Healthcare, "OpenAI rolls out ChatGPT for Healthcare," 2026. https://www.fiercehealthcare.com/health-tech/openai-rolls-out-chatgpt-healthcare-genai-workspace-enterprises

HIPAA Journal, "HIPAA Marketing Rules." https://www.hipaajournal.com/hipaa-marketing-rules/
